Online Casino

Weekly cumulative deposit promotion: receive 2,500 baht in free credit

Casino commission rebate promotion: 0.7% on every turnover, with no limit

New member promotion: receive up to 100 baht

The No. 1 online casino website: a direct site, with no agents

Online gambling is now regarded as one way of earning income, and it has drawn many interested people hoping to make a profit. Most of them naturally dislike risk, so as a service provider we offer promotions and the chance for customers and newcomers to practice, study the strategies and rules, and become proficient before playing for real. We also give away free credit every day (limited quantity per day) for those who have studied the games but are not confident about using their own money; they can simply claim the free promotion. Our website has been operating for a long time, so service is not a problem. Whatever type of online casino game you want to play, you can find it on our website, which covers every casino category.

Whatever type or style of online casino game you want to play, you can choose according to your preference. Whether you want to try baccarat, online roulette, online Hi-Lo, online Pok Deng, or online slots, all of them are available, so the range of services is wide.

Beyond variety, we are a site that holds a high standard of service. We are an online casino that pays real money with no deposit required, so you can be confident that when you place a bet, winnings are actually paid out. If you are looking for an online casino with free credit in 2023 and no deposit, this is the site. That is why our site has remained popular up to now, backed by long operation and a large, varied membership.

Advantages of playing at an online casino

In the COVID era it is better not to go out. Some people want to play at home and dislike going to a gambling den and its crowds, so online play is another option for the many people who want to gamble in comfort. There is no need to carry large amounts of cash to exchange at a venue, which is risky. With just an internet connection you can play online casino games on a mobile phone from anywhere in the world. Study how to play the casino games well so that you are prepared.

The thing beginners need to be most careful about when starting at online casino sites is choosing which site to play on. This is the most important point, because for many people choosing the wrong site is life-changing: some are cheated as soon as they deposit money, before they have even played, and others spend a long time playing only to find they cannot withdraw.

So before choosing a site, research it carefully. Do not just play on the first site you find; that is dangerous. Some sites lose credibility the moment you open them: a plain site with nothing on it and no staff to contact. If you do not trust a site, it is better not to play there. Look for sites that have been open for several years and are accepted by many users, and read reviews on other websites before playing. These problems go away if you play at a direct online casino site.

One site has everything

You probably do not want the hassle of hunting down different casino brands. Would it not be better if the world's well-known casinos were gathered on one website, with everything in one place? There is no need to switch back and forth between sites or to transfer money between wallets. If you want to play baccarat from a provider you like, such as AE SEXY, SA Gaming, Venus Casino, eBet, or BG Big Casino, you just click and play immediately. To repeat: no money transfers are needed.

Bored of baccarat and want to spin slots instead? Just exit baccarat and pick a slot or fish-shooting game from a provider you like, for example UFA Slot, PG Slot, Joker Gaming, King Maker, Jili, Red Tiger, Spade Gaming, or FA CHAI, without interrupting your session.

No more wasting time logging in or moving money back and forth. Football betting, lottery betting, and casino games are no longer complicated. Learning how to play casino games is not difficult either; even if you have never played before, you can understand them in just a few minutes. In addition, if you want a baccarat formula, it can be used with every brand, whether Baccarat888, Casino Online777, or Baccarat1688, whatever number the name ends with.

UFABET: a casino site with no agents and plenty of live casino providers to choose from

Beyond the variety of betting formats, which include sports betting, football betting, boxing betting, cockfighting, slots, and fish-shooting games, the UFABET online casino site has many live-streamed casino providers to choose from, and new providers are added regularly.

UFA Casino 0.7%

UFA Casino is the newest online casino provider, returning a 0.7% commission to customers on every bet. Besides the fun of live casino play, there are female live-stream hosts who guide the games; you can chat with them and send gifts to the VJs.
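To make the 0.7% figure concrete, here is a minimal arithmetic sketch. It assumes the rebate is simply 0.7% of each stake with no rounding, caps, or qualifying conditions; the page does not state those details, and the function name `commission_rebate` is hypothetical.

```python
from decimal import Decimal

# 0.7% is the rate quoted on this page; everything else here is an assumption.
REBATE_RATE = Decimal("0.007")


def commission_rebate(stakes):
    """Return the total rebate, in baht, over a list of stake amounts.

    Assumes the rebate is a flat 0.7% of every stake, win or lose.
    """
    total = Decimal("0")
    for stake in stakes:
        total += Decimal(str(stake)) * REBATE_RATE
    return total


if __name__ == "__main__":
    # Three bets totalling 4,000 baht in turnover.
    print(commission_rebate([1000, 500, 2500]))
```

Using `Decimal` rather than floats keeps the currency arithmetic exact.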

AE Sexy Casino

Sexy Baccarat offers more than bikini baccarat. There are many other bets to choose from, such as Dragon Tiger, Sic-Bo, Thai Hi-Lo, Fish-Prawn-Crab (Nam Tao Pu Pla), and more, hosted by dealers, both male and female, who do more than deal cards and have a dealing style unlike any other provider.

Evolution Gaming live casino

A live casino provider that offers more than the familiar card and dice games on the UFABET site. Evolution also has game-show-style online betting, available 24 hours a day, including wheel-of-fortune games, Lightning Roulette, Lightning Baccarat, and Mega Ball bingo. Anyone looking for a wide range of betting games at a casino with no agents should not miss this provider.

SA Gaming

The all-time No. 1 online casino on the UFA site. Besides baccarat and Dragon Tiger, it has the popular card game Pok Deng, the popular Indian card game Teen Patti, and the joker-card guessing game Andar Bahar, all playable on mobile through HTML5.

Venus Casino

A Cambodian casino with dealers in a variety of styles, from bikinis to evening gowns. It also has live casino games such as Classic Baccarat, Speed Baccarat, Insurance Baccarat, Dragon Tiger, Sic-Bo, and online roulette. The minimum bet starts at just 20 baht.

eBET Casino and eBET Casino 0.7%

eBET is a UFABET casino provider with a minimum bet of just 10 baht per round. Besides the popular games, it has other card games in online casino form, with either real human dealers or a virtual casino. These include blackjack, the game of 21 that many people know well, and Niu Niu, a card game described as more exciting than baccarat, with higher stakes and even higher payouts.

BG Gaming

Big Casino is a live-streamed casino provider that is very popular on the UFABET site and lets you adjust the size of your betting chips. It offers a range of card games, including three-card games, Bull Bull (Niu Niu), Hi-Lo, and roulette, plus special variants such as Speed Baccarat, MI Baccarat, and Multi Color Baccarat. These are formats you may not be familiar with, but they are promoted as offering worthwhile returns.

World Entertainment Casino

A new casino provider that only recently launched on the UFA site. WE differs in its betting system: you can bet with real human dealers or against an AI. More interesting than its carefully selected dealers, World Casino is a live casino provider that uses a blockchain to record every betting result, covering both cards already dealt and cards not yet dealt, with verifiable encryption so you can be confident the betting is transparent.
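The page does not describe how WE actually encodes or verifies results. As a rough illustration of how cards that have not yet been dealt can still be made checkable, here is a minimal hash-commitment sketch: the operator publishes a hash before the round and reveals the inputs afterwards. The function names, the salt, and the comma-separated deck format are all assumptions made for this example, not WE's scheme.

```python
import hashlib
import hmac


def commit(deck_order: str, salt: str) -> str:
    """Return a SHA-256 commitment to a deck order (hypothetical format)."""
    payload = f"{salt}:{deck_order}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def verify(commitment: str, deck_order: str, salt: str) -> bool:
    """Check that the revealed deck order and salt match the published hash."""
    return hmac.compare_digest(commitment, commit(deck_order, salt))


if __name__ == "__main__":
    published = commit("K,7,3,A", "round-42-salt")       # published before dealing
    print(verify(published, "K,7,3,A", "round-42-salt"))  # revealed order matches
    print(verify(published, "K,3,7,A", "round-42-salt"))  # altered order does not
```

The salt prevents players from brute-forcing the deck order from the published hash before the round ends.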

MG Live Casino

Another live-streamed online casino room, promoted as having the most attractive dealers. It also has a Hollywood betting room featuring Western dealers, and the hosts who run the games are meant to make play more fun.

UFABET, the No. 1 casino site, comes with a wide variety of betting

Now that you know the popular casino providers on the UFA site, the direct UFA site also has many gambling games to choose from, including:

Online baccarat: the No. 1 card game at online casinos

Baccarat is the No. 1 game in every kind of casino, whether at a physical venue or on the internet. It is a very simple card game: you just guess whether the banker's side or the player's side will have a total closest to 9. Points are counted the same way as in Pok Deng: cards count at face value, except the face cards J, Q, and K, which all count as 10 (effectively zero, since only the last digit of the total matters). Besides classic baccarat, the UFABET site has several other baccarat variants, including:

- Super 6 Baccarat

- Speed Baccarat

- MI Baccarat

- Multi Color Baccarat

- Sexy Baccarat
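The point-counting rule described above for baccarat can be sketched in a few lines. This is a simplified illustration only: it scores the hands as given and leaves out the third-card drawing rules, which the page does not cover. The names `hand_points` and `closer_to_nine` are hypothetical.

```python
# Ace = 1, 2-9 at face value, 10/J/Q/K = 10 (which adds nothing to the last digit).
CARD_POINTS = {"A": 1, "J": 10, "Q": 10, "K": 10}
CARD_POINTS.update({str(n): n for n in range(2, 11)})


def hand_points(cards):
    """Return the value of a hand: the last digit of the summed card points."""
    return sum(CARD_POINTS[card] for card in cards) % 10


def closer_to_nine(player_cards, banker_cards):
    """Return 'player', 'banker', or 'tie' for the hand closest to 9."""
    player, banker = hand_points(player_cards), hand_points(banker_cards)
    if player > banker:
        return "player"
    if banker > player:
        return "banker"
    return "tie"


if __name__ == "__main__":
    # K + 9 = 19, so the hand is worth 9; 7 + 8 = 15, so the hand is worth 5.
    print(closer_to_nine(["K", "9"], ["7", "8"]))
```

Because a hand's value is always between 0 and 9, the higher value is automatically the one closest to 9.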

Popular casino games

Dragon-Tiger card game

Dragon Tiger is a single-card game created to remove doubts over the drawing of a third card. Cards are ranked by face value, from A = 1 up to K = 13. Betting works the same way as in online baccarat, except that instead of opening with two cards per side, one card is dealt to each side and the side with the higher value wins. You can play Dragon Tiger on the UFABET site from just 10 baht per round.
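The Dragon Tiger comparison can be written out as a short sketch using the A = 1 to K = 13 ranking given above. How equal ranks are settled is not stated on this page, so returning "tie" is an assumption, and the function name `dragon_tiger_result` is hypothetical.

```python
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
RANK_VALUE = {rank: index + 1 for index, rank in enumerate(RANKS)}  # A=1 ... K=13


def dragon_tiger_result(dragon_card, tiger_card):
    """Compare one card per side and return 'dragon', 'tiger', or 'tie'."""
    dragon, tiger = RANK_VALUE[dragon_card], RANK_VALUE[tiger_card]
    if dragon > tiger:
        return "dragon"
    if tiger > dragon:
        return "tiger"
    return "tie"  # assumption: equal ranks are treated as a tie


if __name__ == "__main__":
    print(dragon_tiger_result("K", "A"))  # K = 13 against A = 1
```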

Sic-Bo, online Hi-Lo: the international dice game

Another very popular form of betting at the UFABET casino, with a low starting stake of just 10 baht per round at almost every provider. More interesting are the betting options and a payout of up to 150 times the stake if you bet on a specific triple, that is, naming exactly which number all three dice will show.

Roulette: the wheel of fortune

For new bettors just learning to play online casino games, this is an easy place to start, with more than 40 betting options: straight-up number bets, split bets, colour bets, odd-even bets, row bets, and column bets. You can effectively design your own betting layout. It is also a UFABET casino game that is said by word of mouth to be a form of gambling where you have more chance of winning than losing.

Andar-Bahar: the Indian card game

This is another betting game worth watching for customers looking for new casino games. This Indian card game plays similarly to baccarat, but instead of guessing which side will have the higher total, you guess on which side a card matching the main card drawn will turn up. One deck is used per round.
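As a rough sketch of the Andar-Bahar round described above: after the main card is drawn, cards are turned over until one of the same rank appears, and the side it lands on wins. The page does not spell out the dealing order, so this example assumes cards alternate between the two sides starting with Andar; the function name `find_matching_side` and the `first_side` parameter are hypothetical.

```python
def find_matching_side(main_rank, dealt_ranks, first_side="andar"):
    """Return the side ('andar' or 'bahar') where the main card's rank first appears.

    Assumes cards are dealt alternately to the two sides, beginning with
    `first_side`. Returns None if no matching rank is dealt.
    """
    sides = ("andar", "bahar") if first_side == "andar" else ("bahar", "andar")
    for position, rank in enumerate(dealt_ranks):
        if rank == main_rank:
            return sides[position % 2]
    return None


if __name__ == "__main__":
    # Main card is a 7; the third card dealt is a 7, which lands on Andar.
    print(find_matching_side("7", ["3", "K", "7", "9"]))
```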

Three-card game: Teen Patti

Poker is a form of betting that has been recognised as a kind of sport, because besides luck you also have to read and outbluff the dealer and the other players at the table. The three-card game, or Teen Patti, is available from SA Casino, AE Sexy, BIG Gaming, and other providers in the future.

Pok Deng at SA Gaming

The Pok 8, Pok 9 card game you have played since childhood can be played for real money here. You simply guess which player's hand will have a total closest to 9. Both the players and the dealer are dealt only two cards in every hand. To play online Pok Deng, you can bet from a minimum of 20 baht per round on SA Gaming.

UFABET, the No. 1 online casino: every betting game in one place

Besides the live casino with its many providers and games, the UFABET casino has more than 100 online slot games from 20 providers. If you are looking for slots whose jackpots hit easily, you can start with no minimum and play for jackpots on well-known slots such as Roma, Cave Girl (Sao Tham), and the million-baht slots Super UFA and Super Rich, available 24 hours a day with no days off. If you want million-baht slots with real wins and real payouts, UFABET is the answer.

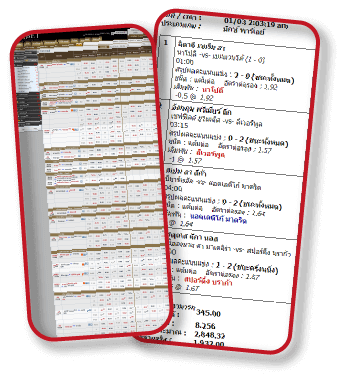

UFA Casino: bet on football at this site and get cashback

Sports fans are not left out. At the UFA online casino you can place single football bets, parlay (step) bets, round-by-round Muay Thai bets, single boxing bets, international boxing bets, and bets on popular sports worldwide, starting from just 10 baht, with up to 1% cashback credited immediately on every bet slip.

Play the UFABET casino on mobile: easier and more convenient for everyone

No more worries about betting through a website, because UFABET is a direct casino site with no agents. You can play on mobile on any system and any network, at any time, with automated deposits and withdrawals. Bet anywhere, anytime, as long as you have internet. The UFABET entry link is available from our admin team on LINE at @UFAAE.